As it happens, I'm currently testing the Microsoft API that lets you build your own NeuroVoice for later use. I'm curious to try out the "expressive" side.

Disney is working on making speech generation sound more emotionally expressive.

The difficulty of generating emotionally expressive voices is that one word can be enunciated in numerous ways, each of which would reveal a different emotional state of the speaker. Most speech generation models are trained to generate the most likely averaged speech, which tends to be neutral in tone.
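
To make the averaging point concrete, here is a minimal toy sketch. It assumes a model that must output a single pitch contour per word and is scored with mean squared error; the two contours and the helper names (`mse`, `average_loss`) are invented for illustration and do not come from Disney's patent or any real speech system.

```python
# Toy illustration only: the numbers below are invented, not real speech data.
# Two hypothetical pitch contours (in Hz) for the same word.
happy = [180.0, 200.0, 220.0, 240.0]  # rising pitch
sad = [240.0, 220.0, 200.0, 180.0]    # falling pitch


def mse(prediction, target):
    """Mean squared error between two contours of equal length."""
    return sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(target)


def average_loss(prediction, targets):
    """Average MSE of one fixed prediction against every target contour."""
    return sum(mse(prediction, t) for t in targets) / len(targets)


targets = [happy, sad]

# The point-wise mean is the single contour that minimises average MSE.
mean_contour = [sum(values) / len(values) for values in zip(*targets)]

print(mean_contour)                         # flat contour: neither rising nor falling
print(average_loss(mean_contour, targets))  # loss of the averaged contour
print(average_loss(happy, targets))         # loss if the model always sounded "happy"
```

In this toy setup the averaged contour is completely flat, yet it scores a lower loss than committing to either emotion, which is one way to see why likelihood-style training can drift toward a neutral tone.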

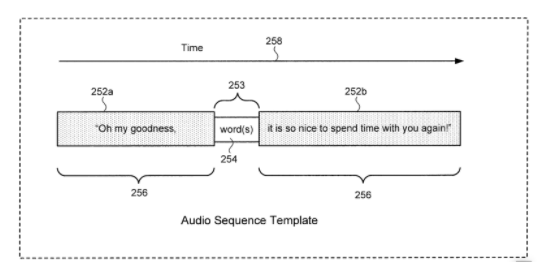

Without getting lost in the details, Disney is essentially looking to train neural networks to generate speech that takes on different emotional contexts, such as happiness, sadness, anger, fear, excitement, affection, dislike and more. As shown in the above image, there’ll be audio templates where speech is generated to fill in the gaps, in a way that aligns with the emotional context of the whole sentence.

Why is this interesting?

In #027 PATENT DROP, we saw that Disney is working on AI role-playing experiences where an AI chatbot will play an intelligent character with kids. Moreover, in #014, we saw that Disney is looking at deploying robot actors that will interact with people in a lifelike fashion.

Putting this all together, Disney is looking to bring its characters to life as physical, embodied, intelligent, emotionally expressive creatures that people can interact with. I wouldn’t be surprised if Disney becomes one of the major breakout brands for consumerising robotics.

If you’re interested in this space, check out what Sonantic is doing with expressive AI voices for the game voiceover industry.

via Patent Drop: read the source article
