Being able to reach from the real world into the virtual one, and vice versa, is nothing new. It is worrying to see patents filed like this…

Facebook is looking towards creating an artificial reality system where content is presented based on what physical objects a user is interacting with.

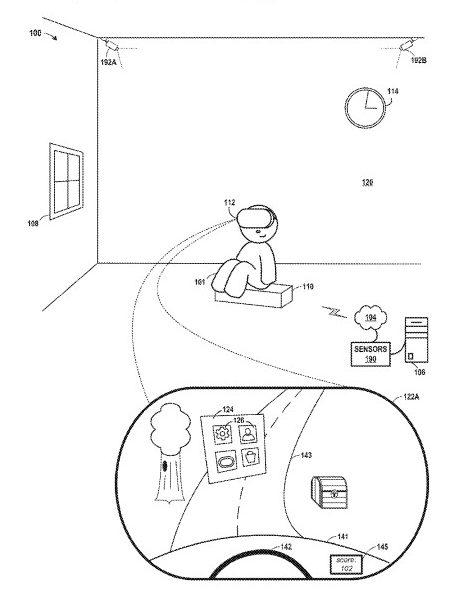

For instance, as illustrated in the diagram above, a user sitting on a chair could be a trigger to launch an artificial reality driving game. Now when the user gets up off the seat, that could be a trigger for exiting or pausing the game. In this example, using physical objects as trigger points provides a seamless way of launching and exiting from an artificial reality experience.

The content launched from these physical objects could either be preconfigured or chosen based on other contextual information. For example, imagine if Facebook had access to your calendar and could see that you have a conference call now. When you sit down at that specific time, a VR conference call could be launched instead of a driving game.

More creepily / interestingly, Facebook’s filing also describes presenting different types of artificial reality content based on a user’s biometric information. For example, if the VR headset determines that a user is exhibiting signs of stress, a calming artificial reality experience might be played.
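To make the trigger idea concrete, here is a minimal Python sketch of how such a selection could work. It is not taken from the filing: every name (`Context`, `select_content`, `handle_interaction`), the stress scale, the threshold, and the priority order between the biometric and calendar rules are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Context:
    """Hypothetical snapshot of what the headset knows when a trigger fires."""
    now: datetime
    meeting_start: Optional[datetime] = None  # from a shared calendar, if any
    meeting_end: Optional[datetime] = None
    stress_level: float = 0.0  # invented scale: 0.0 (calm) to 1.0 (stressed)


# Hypothetical preconfigured mapping from (object, interaction) to content.
DEFAULT_CONTENT = {("chair", "sit"): "driving_game"}

STRESS_THRESHOLD = 0.7  # arbitrary cutoff chosen for this sketch


def select_content(obj: str, interaction: str, ctx: Context) -> Optional[str]:
    """Return the content to launch, or None if this is not a launch trigger."""
    if (obj, interaction) not in DEFAULT_CONTENT:
        return None
    # Assumed priority 1: biometric signal overrides everything else.
    if ctx.stress_level >= STRESS_THRESHOLD:
        return "calming_experience"
    # Assumed priority 2: a calendar meeting in progress overrides the default.
    if (
        ctx.meeting_start is not None
        and ctx.meeting_end is not None
        and ctx.meeting_start <= ctx.now < ctx.meeting_end
    ):
        return "vr_conference_call"
    return DEFAULT_CONTENT[(obj, interaction)]


def handle_interaction(obj: str, interaction: str, ctx: Context) -> str:
    """Map a physical interaction to an action string for the AR system."""
    # Getting up from the chair pauses whatever is running.
    if obj == "chair" and interaction == "stand":
        return "pause"
    content = select_content(obj, interaction, ctx)
    return f"launch:{content}" if content is not None else "ignore"
```

With this sketch, sitting down with no meeting scheduled yields `launch:driving_game`, sitting down during a calendar slot yields `launch:vr_conference_call`, and standing up yields `pause`.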

Why is this interesting?

One of the recurring themes in Patent Drop is the idea of the physical and digital worlds merging into one. This latest filing shows Facebook, like Snap, is actively thinking about blurring the two worlds. Although the examples in this filing are relatively minor, things become exciting when you imagine a world where all of the physical objects in our home can be used to interact with the virtual world. For instance, imagine using your real-world baseball bat as a virtual baseball bat in one game and as a sword in another.

That said, there are big questions as to whether we ought to trust Facebook with all of the data it could be capturing from our bodies and our physical environments.

via Patent Drop: read the source article
